Ideas & Links to Articles

LocalAI - Open Source OpenAI alternative

LocalAI is a free, open-source alternative to OpenAI. It acts as a drop-in replacement REST API that is compatible with the OpenAI API specification for local inference. It lets you run LLMs and generate images, audio, and more, locally or on-prem on consumer-grade hardware, and it supports multiple model families that are compatible with the ggml format. It does not require a GPU.

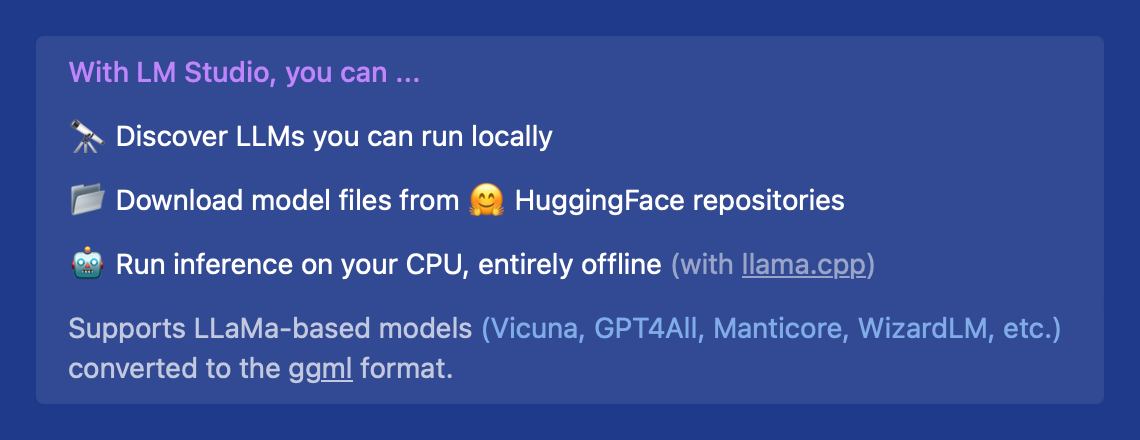
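Since LocalAI follows the OpenAI API shape, a chat request to it can be sketched with nothing but the Python standard library. This is a minimal sketch, not LocalAI's official client: the base URL `http://localhost:8080/v1` and the model name `my-local-model` are assumptions, so replace them with whatever your own server reports.

```python
import json
import urllib.request

# Assumption: a local OpenAI-compatible server is listening here.
# Adjust host and port to match your own setup.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, model="my-local-model", base_url=BASE_URL):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,  # hypothetical model name, use one you have installed
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, **kwargs):
    """Send the request and return the assistant's reply text."""
    req = build_chat_request(prompt, **kwargs)
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    # OpenAI-style responses put the reply under choices[0].message.content
    return body["choices"][0]["message"]["content"]
```

With a server running, `print(ask("Say hello in one sentence."))` should print the model's reply. Passing a different `base_url` should let the same sketch talk to any other local server that exposes an OpenAI-compatible endpoint.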

LM Studio

🤖 - Run LLMs on your laptop, entirely offline

👾 - Use models through the in-app Chat UI or an OpenAI compatible local server

📂 - Download any compatible model files from HuggingFace 🤗 repositories

🔭 - Discover new & noteworthy LLMs in the app's home page

How is it going? I have been playing with Llama (Meta's LLM), which supposedly has memory for conversations. It doesn't know what time it is when asked, though. I haven't ported it to run on the Jetson Orin Nano yet, but that is the plan.
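On the time question: a model has no clock of its own, so it can only know the time if the prompt tells it. One possible workaround, sketched here under the assumption that you talk to the model through an OpenAI-style list of chat messages, is to prepend a system message carrying the current time. The helper name `with_current_time` is made up for illustration.

```python
from datetime import datetime


def with_current_time(messages, now=None):
    """Return a new message list that starts with a system message
    stating the current date and time."""
    if now is None:
        # Local time with its UTC offset attached
        now = datetime.now().astimezone()
    stamp = now.isoformat(timespec="minutes")
    system = {
        "role": "system",
        "content": f"The current local date and time is {stamp}.",
    }
    # Build a new list so the caller's history is left untouched
    return [system, *messages]
```

Calling `with_current_time([{"role": "user", "content": "What time is it?"}])` just before each request gives the model something to answer from.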

GPT Chat on your computer.

We need to do it on our own computer so as not to use third-party services. For example: https://huggingface.co/

OpenChat https://huggingface.co/openchat/openchat_3.5

demo: https://openchat.team/

DeepSeek Coder https://github.com/deepseek-ai/deepseek-coder

demo: https://chat.deepseek.com/coder

LLaVA: Large Language and Vision Assistant https://github.com/haotian-liu/LLaVA

Thanks for sharing, Alex. Very interesting R&D you've posted.